Your AI agents need an operating model, not a roadmap

Most teams add AI agents before they have an operating model. Here's the ownership, approval, and governance framework to build first.

The bottleneck in most marketing organizations right now is not tool access. It is the absence of a working model for what happens after the tool connects.

Over the past several months, a consistent pattern has appeared across ecommerce, paid media, social, and revenue workflows: AI is no longer sitting at the edge of marketing operations as an assistant. It is being positioned at the execution layer, handling campaign strategy, targeting decisions, audience segmentation, and live budget pacing.

Tools like BiteSpeed's AI Marketer, Omnisend's MCP integration with ChatGPT, and Meta's new ads AI connectors are not productivity features bolted onto existing workflows. They are execution surfaces, and they require a different kind of organizational readiness than anything marketing teams have dealt with before.

The teams that will extract real value from this shift are not necessarily the ones with the most advanced tooling. They are the ones with clear ownership structures, defined approval thresholds, and data foundations that can actually support autonomous decision-making.

That is the operating model. And most teams do not have one yet.

Table of contents

Jump to each section:

- Why agentic marketing is now an operations problem, not a novelty story

- The three execution modes: draft-only, approval-gated, and auto-executed

- How to assign ownership across ops, channel teams, and leadership

- What data, brand, and audit controls must exist before rollout

- A 90-day pilot structure for testing agentic workflows safely

- What your team structure looks like after the pilot

Why agentic marketing is now an operations problem, not a novelty story

Adoption numbers have crossed a threshold that makes this conversation urgent rather than speculative. According to a May 2025 PwC survey of 300 senior executives, 52% say AI agents are broadly or fully adopted across their organization, and 88% plan to increase AI-related budgets specifically to fund agentic AI initiatives. Sales and marketing is already the second-most-common business function for AI agent deployment, sitting at 20% of organizations surveyed.

That adoption curve is arriving faster than most governance structures can keep up with. Gartner projects that 33% of enterprise software applications will incorporate agentic AI by 2028, up from less than 1% in 2024. The implication is not just that more tools will have AI features. It is that those features will increasingly make consequential decisions on their own, whether or not the team has decided who owns the consequences.

This is the core operations problem: the tools are moving faster than the permission structures. BiteSpeed's AI Marketer, for example, coordinates six specialized agents trained on more than 100,000 campaigns to run campaign strategy, targeting, creative production, execution, and analysis across the customer lifecycle. That is an enormous scope of decision-making to hand to one system.

When the agent is wrong about a discount threshold or fires a winback campaign at the wrong segment, the question immediately becomes: who knew this was running, who approved the parameters, and who owns the override?

Omnisend's MCP integration surfaces a similar challenge from a different angle. Compressing the loop between insight and execution into a single chat interface is genuinely powerful for speed-to-action. But the same compression that makes it efficient also makes it easy to skip the review steps that would normally slow down a questionable decision. Ease of execution and quality of governance are in tension by design.

The teams most at risk right now are the ones treating agentic AI adoption as a vendor evaluation problem. The real work is organizational.

The three execution modes: draft-only, approval-gated, and auto-executed

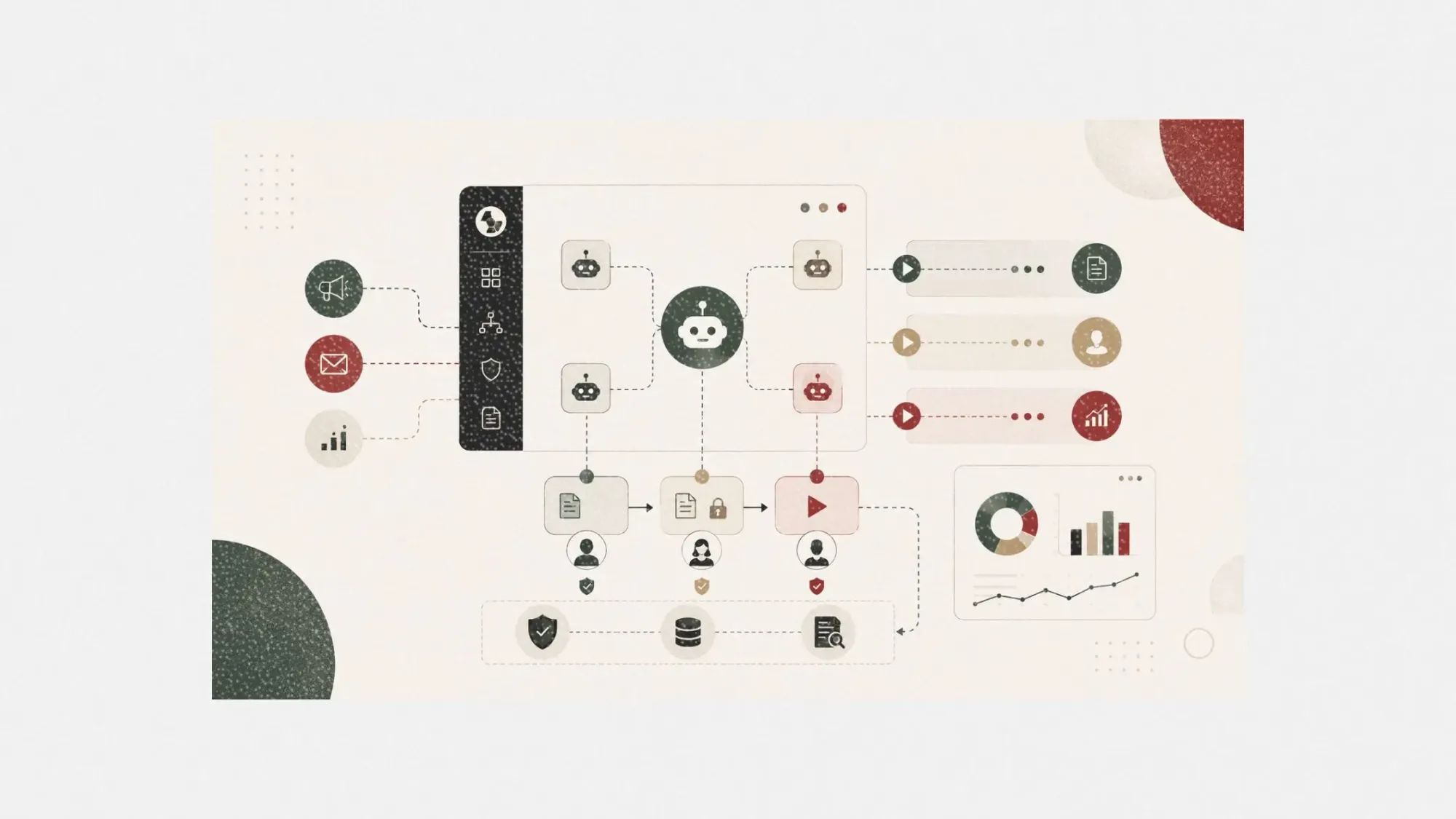

Before a single workflow gets automated, teams need a classification system for what kind of automation any given task is eligible for. The simplest useful framework has three modes.

Draft-only means the AI generates an output, but a human reviews and publishes it. No live system is touched. This is the right mode for anything with brand sensitivity, legal exposure, or high creative variance.

Brand voice responses on social, personalized outreach copy, crisis-adjacent messaging, and high-value customer communications all belong here regardless of how confident the AI seems.

Approval-gated means the AI identifies the action and prepares it, but a named human (or team) must explicitly approve before execution. This is the right mode for anything with financial impact, audience scope at scale, or channel-specific risk.

Discount offers, large send volumes, budget reallocation, and high-priority lifecycle triggers (winback, lapse, churn prevention) should run through an approval gate. The approval step does not have to be slow. A well-structured workflow can route a notification to the right person within minutes. What matters is that someone with accountability reviewed it.

Auto-executed means the AI acts directly within defined parameters. This is appropriate only for tasks that are low-risk, easily reversible, and tightly scoped. Replenishment reminders for consumable products, time-zone-based send-time optimization, reporting pulls, and audience refresh for stable segment definitions are reasonable candidates. The key question before assigning a task to auto-execution is: if this fires incorrectly at full scale, what is the damage, and how quickly can it be reversed?

Classifying use cases by risk and reversibility is the foundational decision that everything else depends on. Teams that skip this step and hand off a broad category of work to an agent without mode assignment end up discovering the classification problem after something goes wrong. That is a far more expensive place to learn it.
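The classification can be written down as a small decision rule so that every use case gets a mode before it is automated. The sketch below is illustrative only: the `UseCase` fields and the order of the checks are assumptions that mirror the criteria above (brand sensitivity first, then financial impact, scale, and reversibility), not any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum


class ExecutionMode(Enum):
    DRAFT_ONLY = "draft-only"
    APPROVAL_GATED = "approval-gated"
    AUTO_EXECUTED = "auto-executed"


@dataclass(frozen=True)
class UseCase:
    """Hypothetical risk profile for one candidate agent task."""
    name: str
    brand_sensitive: bool   # brand voice, legal exposure, high creative variance
    financial_impact: bool  # discounts, budget moves, pricing
    large_audience: bool    # send volume or audience scope at scale
    reversible: bool        # can a bad run be undone quickly?


def classify(use_case: UseCase) -> ExecutionMode:
    # Brand-sensitive work stays draft-only regardless of model confidence.
    if use_case.brand_sensitive:
        return ExecutionMode.DRAFT_ONLY
    # Money, scale, or irreversibility means a named human must approve.
    if use_case.financial_impact or use_case.large_audience or not use_case.reversible:
        return ExecutionMode.APPROVAL_GATED
    # Only low-risk, reversible, tightly scoped tasks may run on their own.
    return ExecutionMode.AUTO_EXECUTED


cases = [
    UseCase("crisis-adjacent social reply", True, False, False, False),
    UseCase("winback discount offer", False, True, True, False),
    UseCase("replenishment reminder", False, False, False, True),
]
for case in cases:
    print(f"{case.name}: {classify(case).value}")
```

Checking brand sensitivity first is deliberate: it keeps a task in draft-only even when it would otherwise look cheap and reversible.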

How to assign ownership across ops, channel teams, and leadership

Ownership in an agentic workflow is not just about who uses the tool. It is about who is accountable when the tool makes a decision, and who has the authority to override it.

A functional ownership model needs three distinct layers.

Workflow owners are the people, usually marketing ops or CRM leads, who define the parameters and logic for each automation. They determine what triggers an agent action, what the acceptable output range looks like, and what conditions should pause or escalate the workflow. This is a design role as much as an operational one. The workflow owner is responsible for the quality of the guardrails, not just the existence of them.

Channel approvers are the practitioners who sit in the approval-gated layer for their specific domain. A lifecycle marketer owns approval for email and SMS campaigns above a certain send volume. A paid media lead owns approval for budget reallocations above a threshold. A social ops manager owns approval for automated responses that fall outside the pre-cleared response set. These approvers need to be named, reachable within a defined window, and backed by a secondary approver for time-sensitive decisions.
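A minimal sketch of that routing logic, assuming each channel has one threshold, a named primary approver, a named backup, and a response window. The channels, thresholds, email addresses, and windows below are hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class ApprovalRule:
    """Hypothetical per-channel gate with named individuals, not teams."""
    metric: str               # what the threshold measures
    threshold: float
    primary: str              # named approver
    backup: str               # secondary approver for time-sensitive decisions
    response_window: timedelta


RULES = {
    "email": ApprovalRule("send_volume", 50_000, "priya@example.com",
                          "sam@example.com", timedelta(minutes=30)),
    "paid": ApprovalRule("budget_shift_usd", 5_000, "jordan@example.com",
                         "alex@example.com", timedelta(minutes=15)),
}


def route(channel: str, value: float, requested_at: datetime,
          now: datetime) -> Optional[str]:
    """Return who must approve, or None if the action is below the threshold."""
    rule = RULES[channel]
    if value < rule.threshold:
        return None
    # Escalate to the backup once the primary's response window has lapsed.
    if now - requested_at > rule.response_window:
        return rule.backup
    return rule.primary


t0 = datetime(2025, 6, 2, 9, 0)
print(route("email", 12_000, t0, t0))                          # below threshold
print(route("email", 80_000, t0, t0 + timedelta(minutes=5)))   # primary approver
print(route("email", 80_000, t0, t0 + timedelta(minutes=45)))  # backup approver
```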

Executive oversight covers the decisions that span functions: AI budget allocation, new agent deployment, changes to brand voice or pricing logic baked into automation, and any post-incident review. This layer does not need to be in the day-to-day loop, but it needs to exist and be available when an automated decision produces a result that requires judgment above the channel level.

One structural point matters more than the layers themselves: ownership cannot be assigned to a team. It must be assigned to a named individual. When a $40,000 discount campaign fires erroneously because a discount threshold parameter was set too broadly, "the lifecycle team" did not own it. Someone specific did, and the absence of that specificity is usually what causes the incident to repeat.

What data, brand, and audit controls must exist before rollout

The failure rate for AI marketing initiatives is high, and the cause is almost never the AI model itself. Gartner projects that through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data. Forrester stated directly in 2024 that "data quality is now the primary factor limiting B2B GenAI adoption," ranking above model accuracy, compute, and talent. The RAND Corporation's 2025 analysis of enterprise AI initiatives found that 80.3% fail to deliver the business value promised, and RAND's breakdown of the projects that did succeed found that data domains had been cleaned before the project started in nearly every case.

For marketing teams, this translates into three concrete pre-conditions.

Data readiness means your customer records, segment definitions, and behavioral event data are connected, deduplicated, and accurate before any agent touches them. Only 16% of RevOps professionals report trusting their own data accuracy, and that figure should be treated as a warning signal rather than an industry norm. An agent that runs lifecycle campaigns on top of a CRM with duplicate records and misattributed events will produce confident-looking automation on corrupted inputs. The cleanup has to come first.
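One way to make "cleanup has to come first" enforceable is a readiness gate that measures duplication before an agent gets access. This sketch assumes customer records keyed by email and a hypothetical 1% duplicate ceiling; real identity resolution usually matches on more than one field, so treat it as a starting check rather than a complete one.

```python
from collections import Counter


def normalize_email(email: str) -> str:
    # Case and stray whitespace are common sources of silent duplicates.
    return email.strip().lower()


def duplicate_rate(records: list) -> float:
    """Share of records whose normalized email appears more than once."""
    if not records:
        return 0.0
    counts = Counter(normalize_email(r["email"]) for r in records)
    duplicated = sum(n for n in counts.values() if n > 1)
    return duplicated / len(records)


def ready_for_agent(records: list, max_dup_rate: float = 0.01) -> bool:
    # Hypothetical gate: block agent access until duplicates are at or under 1%.
    return duplicate_rate(records) <= max_dup_rate


records = [
    {"id": 1, "email": "Ana@Example.com"},
    {"id": 2, "email": "ana@example.com "},
    {"id": 3, "email": "ben@example.com"},
    {"id": 4, "email": "cy@example.com"},
]
print(duplicate_rate(records))   # two of four records collide after normalization
print(ready_for_agent(records))  # not ready
```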

Brand and content controls mean the agent has access to an approved content library, a defined set of voice guidelines, and locked parameters for anything that affects brand tone, pricing, or the promises made to customers. The agent is only as governed as the constraints you build around it. Leaving creative parameters open-ended and hoping the model stays on-brand is a governance failure, not a feature of the tool.
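"Locked parameters" can be as simple as a validation step that every agent proposal must pass before it reaches an approver or a live system. The sketch below assumes a proposal is a plain dictionary with hypothetical `discount_pct` and `copy` keys, and that the workflow owner sets a discount cap and a list of banned claims; the specific values are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LockedParameters:
    """Hypothetical brand and pricing limits set by the workflow owner."""
    max_discount_pct: float = 20.0
    banned_phrases: tuple = ("guaranteed", "lowest price ever")


def violations(proposal: dict, locks: LockedParameters) -> list:
    """List every way a proposed action breaks the locked parameters."""
    problems = []
    discount = proposal.get("discount_pct", 0)
    if discount > locks.max_discount_pct:
        problems.append(f"discount {discount}% exceeds {locks.max_discount_pct}% cap")
    copy = proposal.get("copy", "").lower()
    for phrase in locks.banned_phrases:
        if phrase in copy:
            problems.append(f"copy uses banned phrase: {phrase!r}")
    return problems


proposal = {"discount_pct": 35, "copy": "Guaranteed savings this weekend only"}
for problem in violations(proposal, LockedParameters()):
    print(problem)
```

Returning every violation, rather than stopping at the first, gives the approver and the audit log a complete picture of why a proposal was blocked.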

Audit and override logs mean every automated action is recorded with a timestamp, the parameters that triggered it, and the outcome. This is not optional if you want to diagnose failures, demonstrate compliance, or build institutional knowledge about what the agent has done over time.

The override log is particularly important: when a human overrides an automated decision, that event should be captured and reviewed, because override patterns are often the earliest signal that a parameter is wrong before a larger incident surfaces.
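A minimal sketch of an audit entry and an override-rate check, assuming each automated action is logged with a timestamp, the triggering parameters, the outcome, and the named person who overrode it (if anyone did). Grouping by workflow is a simplification standing in for "per parameter set," and the 20% review threshold is a hypothetical value.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class AuditEntry:
    """One automated action: when, what triggered it, and what happened."""
    timestamp: datetime
    workflow: str
    parameters: dict
    outcome: str
    overridden_by: Optional[str] = None  # named human who overrode, if any


def override_rates(log: list) -> dict:
    """Fraction of logged actions per workflow that a human overrode."""
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for entry in log:
        totals[entry.workflow] += 1
        if entry.overridden_by is not None:
            overrides[entry.workflow] += 1
    return {wf: overrides[wf] / totals[wf] for wf in totals}


def flag_for_review(log: list, threshold: float = 0.2) -> list:
    # A high override rate is an early signal that a parameter is wrong.
    return sorted(wf for wf, rate in override_rates(log).items() if rate >= threshold)


t = datetime(2025, 6, 2, 9, 0)
log = [
    AuditEntry(t, "winback", {"discount_pct": 15}, "sent", overridden_by="priya@example.com"),
    AuditEntry(t, "winback", {"discount_pct": 15}, "sent"),
    AuditEntry(t, "replenishment", {"days_since_order": 30}, "sent"),
    AuditEntry(t, "replenishment", {"days_since_order": 30}, "sent"),
]
print(override_rates(log))
print(flag_for_review(log))
```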

Meta's AI connectors for third-party tools are a useful illustration here. The connectors create real opportunity for agencies and martech vendors to build optimization logic on top of Meta's campaign layer. But as ContentGrip noted, access alone does not fix messy ownership, guardrails, or success metrics. The connectors are infrastructure. The governance has to come from the team deploying them.

A 90-day pilot structure for testing agentic workflows safely

A phased pilot creates learning checkpoints without committing the full stack to automation before the governance model is proven.

Days 1 to 30: scope and classify. Pick one marketing function, not one tool. Choose a scope where the data is cleanest, the use cases are lowest-risk, and the team has the most operational clarity. Replenishment campaigns in lifecycle, or reporting automation in paid media, are good starting points.

Document every use case you are considering and run each one through the execution mode classification. Assign workflow owners and approvers by name. Do not enable any auto-execution during this phase.

Days 31 to 60: run approval-gated. Enable the AI to prepare and recommend actions, but keep all execution behind an approval step. This is the phase where you learn whether the agent's recommendations match your team's judgment, whether the parameters are producing reasonable outputs, and whether the approval workflow is actually functional at the volume the agent generates.

Track every approval, every modification, and every rejection. The modification and rejection patterns are your tuning data.

Days 61 to 90: selective auto-execution. Move only the use cases with the cleanest approval records and lowest modification rates into auto-execution. Keep everything else in the approval-gated mode. Set explicit performance thresholds that will trigger a pause and review, and make sure the override log is being reviewed weekly, not just stored.
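The promotion decision at day 60 and the pause rule after it can both be stated explicitly. This sketch assumes the approval-gated phase produced a count of actions approved as-is, modified, and rejected for each use case; the minimum volume (50), the 5% intervention ceiling, and the pacing band are hypothetical values a team would set for itself.

```python
from dataclasses import dataclass


@dataclass
class PilotRecord:
    """Approval-gated results for one use case during days 31 to 60."""
    use_case: str
    approved_as_is: int
    modified: int
    rejected: int

    @property
    def total(self) -> int:
        return self.approved_as_is + self.modified + self.rejected

    @property
    def intervention_rate(self) -> float:
        # No history counts as maximum risk, never as a clean record.
        return (self.modified + self.rejected) / self.total if self.total else 1.0


def promote_to_auto(records: list, min_volume: int = 50,
                    max_intervention: float = 0.05) -> list:
    """Hypothetical bar: enough volume and almost no human edits."""
    return [r.use_case for r in records
            if r.total >= min_volume and r.intervention_rate <= max_intervention]


def should_pause(observed: float, floor: float, ceiling: float) -> bool:
    # Explicit performance band: any reading outside it pauses the workflow.
    return not (floor <= observed <= ceiling)


records = [
    PilotRecord("replenishment reminder", 118, 2, 0),  # 2 of 120 touched
    PilotRecord("winback offer", 40, 9, 3),            # 12 of 52 touched
    PilotRecord("paid reporting pull", 20, 0, 0),      # too little volume yet
]
print(promote_to_auto(records))
# e.g. spend pacing at 140% of plan against an 80-120% band
print(should_pause(1.4, floor=0.8, ceiling=1.2))
```

Requiring a minimum volume keeps a use case with a short, spotless record in approval-gated mode until it has been tested enough to trust.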

At the end of 90 days, you have a documented record of what the agent did, how often humans intervened, what the intervention patterns looked like, and whether the data quality held up under real operational conditions. That record is what justifies expanding scope, not the vendor's case study.

What your team structure looks like after the pilot

The teams that navigate agentic AI well do not look like teams that automated a bunch of tasks. They look like teams that redesigned how accountability works.

The workflow owner role, whoever holds it, becomes one of the more strategically consequential positions in marketing operations. They are responsible for parameter design, which is the decision layer that determines what the agent will do at scale. That is a job that requires deep channel expertise, judgment about brand risk, and enough technical fluency to translate business logic into automation parameters. It is not a coordinator role.

Approval structures get faster over time, not slower, because the agent's track record narrows the set of decisions that need human review. The long-term goal is not to approve everything the AI does. It is to shrink the approval-gated category until every decision that lands there genuinely warrants human attention.

What does not change, regardless of how mature the automation becomes: someone needs to own the outcomes. The agent is not a named accountable party. The human who set the parameters is. Building that clarity into team structure now, before the tools scale, is the work that determines whether agentic marketing becomes a durable operational advantage or an expensive exercise in troubleshooting.

Tool access is not the differentiator. Operating model is.