X and TikTok face regulatory heat in Singapore

IMDA flags social media safety gaps in 2025 report

Singapore’s regulator has put X and TikTok under intensified scrutiny, signaling a broader shift in how governments are holding platforms accountable for harmful content.

For marketers, this is not just a policy update. It is a reminder that platform risk, brand safety, and audience trust are becoming deeply intertwined.

This article explores what triggered the action from Singapore’s Infocomm Media Development Authority (IMDA), how major platforms stack up on safety, and what this means for marketers navigating an increasingly regulated social media landscape.

Short on time?

Here’s a table of contents for quick access:

- Why Singapore flagged X and TikTok over harmful content

- How social platforms performed in IMDA’s 2025 safety assessment

- Why this signals a global shift in social media regulation

- What marketers should know about platform risk and brand safety

Why Singapore flagged X and TikTok over harmful content

Singapore’s IMDA has placed X and TikTok under enhanced supervision after identifying “serious weaknesses” in their ability to detect and remove harmful content.

The findings are stark. Cases of child sexual exploitation material on X more than doubled, from 33 in 2024 to 73 in 2025. On TikTok, terrorism-related content from Singapore-based accounts surfaced for the first time, with 17 cases recorded.

More concerning is how the content was handled. Both platforms removed the flagged material only after IMDA intervened, pointing to gaps in their proactive detection systems. In TikTok’s case, some user-reported terrorism content was initially deemed compliant with platform guidelines, raising questions about moderation accuracy.

As a result, both platforms must now submit progress updates and demonstrate improvements in their next safety reports by June 2026. Failure to do so could lead to penalties of up to SG$1 million under the Broadcasting Act.

How social platforms performed in IMDA’s 2025 safety assessment

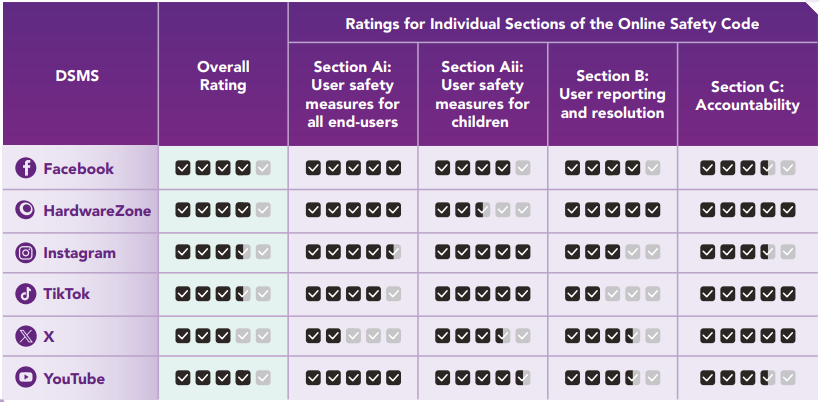

The broader Online Safety Assessment Report 2025 evaluated six major platforms: Facebook, HardwareZone, Instagram, TikTok, X, and YouTube.

Key takeaways:

- Top performers: Facebook, YouTube, and HardwareZone received the highest overall ratings, though gaps in child safety remain

- Strong coverage: Instagram and TikTok were noted for having relatively comprehensive safety measures

- Weakest performer: X ranked lowest overall, particularly due to poor proactive detection of harmful content

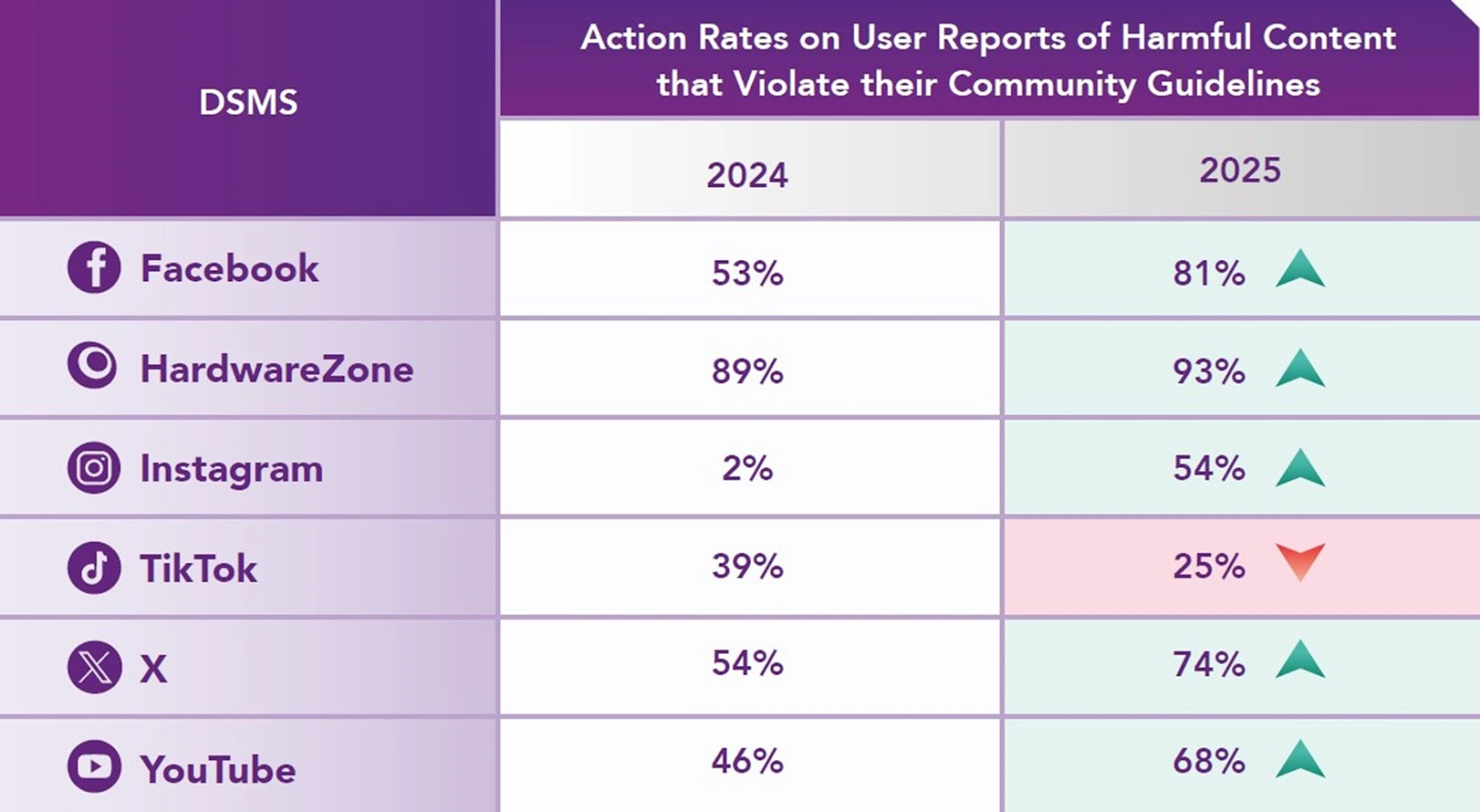

- Reporting gaps: TikTok’s action rate on reported harmful content dropped sharply from 39% to 25%

There is some progress. Average response times improved across all platforms. Facebook reduced its action time from 9 days to 4 days, while X cut its response time from 10 days to 5 days.

Still, faster response does not necessarily mean better prevention. The report highlights that proactive detection and accurate classification remain weak points across the ecosystem.

Why this signals a global shift in social media regulation

Singapore’s move is part of a larger global trend toward stricter platform accountability, especially around child safety and harmful content.

Authorities in Singapore are now exploring additional restrictions, including:

- Limiting direct messaging features for minors

- Restricting autoplay and infinite scroll mechanics

- Introducing stronger age assurance requirements

These discussions mirror actions in other markets. Australia has already introduced restrictions on social media use for users under 16. In the US, courts are increasingly holding platforms accountable for addictive design and child safety failures.

The direction is clear. Governments are no longer relying solely on platform self-regulation. Instead, they are actively shaping how social media products function, particularly for younger users.

What marketers should know about platform risk and brand safety

For marketers, this is more than a compliance story. It directly affects where and how brands show up.

Here are the key implications:

1. Platform risk is rising

Regulatory scrutiny can lead to sudden policy changes, feature restrictions, or reputational damage. Brands heavily reliant on a single platform face higher exposure.

2. Brand safety is evolving beyond content adjacency

It is no longer just about avoiding unsafe content placements. It is about aligning with platforms that demonstrate responsible moderation and governance.

3. User trust is becoming a competitive advantage

Platforms that can prove safer environments may attract more engaged and higher-quality audiences over time.

4. AI moderation is under the microscope

Both X and TikTok have committed to improving automated detection. Marketers should expect growing demands for transparency about how AI systems moderate content.

5. Diversification is no longer optional

Relying on one or two major platforms is increasingly risky. A diversified strategy that spans owned channels, paid channels, and emerging platforms is critical.

Singapore’s action against X and TikTok highlights a turning point in platform accountability. Faster moderation is not enough. Regulators now expect proactive detection, accurate classification, and measurable improvements.

For marketers, the takeaway is clear. Platform selection is no longer just about reach and performance. It is about risk, trust, and long-term sustainability. As regulation tightens globally, brands that stay agile, diversify their channels, and prioritize safe environments will be better positioned to adapt.